Trunk Merge Queue vs Mergify Merge Queue

Mergify runs a merge queue. Trunk runs one that never falls behind.

Tired of breaking main and wasting CI time? These teams were too.

Last updated: April 2026

Features

Works with any CI

Trunk: ✅

Mergify: ✅

Both work with any CI provider that reports status checks to GitHub, including GitHub Actions, CircleCI, Buildkite, and Jenkins.

Flaky test protection

Trunk: ✅ Native to queue

Mergify: ❌ Not in the queue

The most common reason a merge queue stalls at scale isn't a real failure - it's a flaky test. At 50+ PRs a day, a single flaky test can stall the queue for hours and trigger a cascade of manual re-runs. Trunk detects when a PR's speculative check fails but a check further back in the queue, which includes the same changes, passes. It marks the failure as flaky and keeps merging. Mergify has no equivalent: a flaky failure removes the PR and rebuilds the speculation chain from scratch, burning CI time and blocking throughput.
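The flaky-failure rule described above can be sketched in a few lines. This is a minimal illustration, not Trunk's implementation: the `Check` enum, the `classify_failures` function, and the PR names are all hypothetical, and the sketch assumes that the speculative check at each queue position includes the changes of every PR ahead of it.

```python
from enum import Enum


class Check(Enum):
    PENDING = "pending"
    PASSED = "passed"
    FAILED = "failed"


def classify_failures(queue: list[tuple[str, Check]]) -> dict[str, str]:
    """Label each failed PR as 'flaky' or 'failed'.

    `queue` is ordered front to back. Assumption: the check at position i
    tested main plus PRs 0..i, so a pass at a later position also
    exercised the earlier PR's changes.
    """
    verdicts: dict[str, str] = {}
    for i, (pr, status) in enumerate(queue):
        if status is not Check.FAILED:
            continue
        # A later pass means the same changes succeeded in a larger build.
        later_pass = any(s is Check.PASSED for _, s in queue[i + 1:])
        verdicts[pr] = "flaky" if later_pass else "failed"
    return verdicts


queue = [
    ("pr-101", Check.PASSED),
    ("pr-102", Check.FAILED),   # fails, but pr-103 (which includes it) passes
    ("pr-103", Check.PASSED),
    ("pr-104", Check.FAILED),   # nothing behind it has passed
]
verdicts = classify_failures(queue)
print(verdicts)  # {'pr-102': 'flaky', 'pr-104': 'failed'}
```

In a live queue the "failed" verdict would be provisional while checks further back are still pending; the sketch ignores that timing.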

Batching

Trunk: ✅

Mergify: ✅

Both support batching. Mergify's bisect-on-failure automatically identifies the culprit PR within a failed batch. Trunk's batching integrates with parallel queue mode to reduce average queue depth.
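To make bisect-on-failure concrete, here is a generic binary search over a failed batch. It is a sketch of the general technique, not Mergify's actual algorithm, and it assumes a single culprit and deterministic CI. The `find_culprit` function and the `passes` callback (standing in for a CI run on a prefix of the batch) are hypothetical names.

```python
from typing import Callable, Sequence


def find_culprit(batch: Sequence[str],
                 passes: Callable[[Sequence[str]], bool]) -> str:
    """Return the first PR whose inclusion makes the batch fail.

    Assumptions: the full batch fails, an empty batch would pass,
    and there is exactly one culprit.
    """
    lo, hi = 0, len(batch)  # invariant: batch[:lo] passes, batch[:hi] fails
    while hi - lo > 1:
        mid = (lo + hi) // 2
        if passes(batch[:mid]):
            lo = mid
        else:
            hi = mid
    return batch[lo]


batch = [f"pr-{n}" for n in range(1, 9)]  # pr-1 .. pr-8
runs: list[int] = []                      # size of each simulated CI run


def passes(prs: Sequence[str]) -> bool:
    runs.append(len(prs))
    return "pr-6" not in prs              # pr-6 is the simulated culprit


culprit = find_culprit(batch, passes)
print(culprit, len(runs))  # pr-6 3
```

For a batch of eight PRs this takes three extra CI runs instead of up to eight individual ones, which is where the savings from bisection come from.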

Dynamic parallel queues

Trunk: ✅ Dependency-aware, automatic

Mergify: ❌ Manual, config required

Both support running multiple queues in parallel. Trunk infers lanes dynamically from impacted targets with no configuration required - the queues reflect what's actually changing. Mergify requires manually defining scopes in a config file. In a monorepo that's moving fast, that configuration goes stale fast.
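One way to picture lanes inferred from impacted targets: PRs whose target sets overlap must be tested together, and PRs with disjoint targets can run in separate lanes. The sketch below is illustrative only. `assign_lanes` and the Bazel-style target labels are hypothetical, and how Trunk actually computes lanes is not described here.

```python
def assign_lanes(impacted: dict[str, set[str]]) -> list[list[str]]:
    """Group PRs into lanes; PRs sharing any impacted target share a lane.

    `impacted` maps each PR (in queue order) to the targets it affects.
    """
    order = {pr: i for i, pr in enumerate(impacted)}
    lanes: list[tuple[set[str], list[str]]] = []  # pairwise-disjoint target sets
    for pr, targets in impacted.items():
        merged_targets, merged_prs = set(targets), [pr]
        remaining = []
        for lane_targets, lane_prs in lanes:
            if lane_targets & merged_targets:
                # Overlap: fold the existing lane into the new PR's lane.
                merged_targets |= lane_targets
                merged_prs += lane_prs
            else:
                remaining.append((lane_targets, lane_prs))
        lanes = remaining + [(merged_targets, merged_prs)]
    # Restore queue order inside each lane and across lanes.
    result = [sorted(prs, key=order.__getitem__) for _, prs in lanes]
    return sorted(result, key=lambda prs: order[prs[0]])


impacted = {
    "pr-1": {"//api:server"},
    "pr-2": {"//web:app"},
    "pr-3": {"//api:server", "//lib:auth"},
    "pr-4": {"//docs:site"},
    "pr-5": {"//lib:auth", "//web:app"},  # bridges the api and web lanes
}
lanes = assign_lanes(impacted)
print(lanes)  # [['pr-1', 'pr-2', 'pr-3', 'pr-5'], ['pr-4']]
```

Because the lanes are recomputed from whatever the queued PRs touch, nothing in this model needs a hand-maintained scope file.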

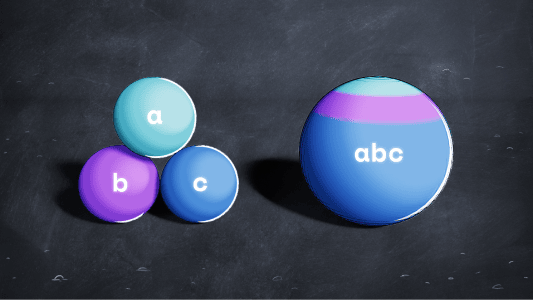

Speculative / predictive testing

Trunk: ✅

Mergify: ✅

Both test the merged state ahead of time. The meaningful difference is what happens when a speculative check fails due to a flaky test: Trunk recovers automatically; Mergify restarts the affected speculation chain.

Direct merge to main

Trunk: ✅ Skips redundant testing

Mergify: ❌ Not offered

When a PR is based on the tip of main and the queue is empty, Trunk merges directly without re-running CI. This eliminates wasted compute for single PRs landing on an idle repo - a common scenario that most merge queues penalize unnecessarily.
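The direct-merge condition amounts to a simple predicate. The sketch below uses hypothetical names (`PullRequest`, `can_merge_directly`) and adds one assumption not stated above: that the PR's own CI has already passed on its current base, which is what makes a second run redundant.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    number: int
    base_sha: str        # commit on main that the PR branch is based on
    checks_passed: bool  # assumption: PR-level CI already ran on that base


def can_merge_directly(pr: PullRequest, main_tip_sha: str, queue_depth: int) -> bool:
    """True when re-testing in the queue would repeat an identical CI run."""
    return (
        pr.checks_passed
        and pr.base_sha == main_tip_sha  # PR already tested against current main
        and queue_depth == 0             # nothing ahead that could change main
    )


pr = PullRequest(number=42, base_sha="abc123", checks_passed=True)
print(can_merge_directly(pr, main_tip_sha="abc123", queue_depth=0))  # True
print(can_merge_directly(pr, main_tip_sha="def456", queue_depth=0))  # False
```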

Optimistic merging

Trunk: ✅ Merges when downstream check passes

Mergify: ❌ Not offered

If a later speculative check that includes a PR passes before the earlier ones complete, Trunk merges without waiting. Fewer CI runs, lower latency, same correctness guarantee. At high PR volume this compounds into significant throughput gains.
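The optimistic rule can be expressed as "merge everything up to the deepest passing check." The following is a minimal sketch under the assumption that the speculative check at position i tests main plus every PR at positions 0..i. The `mergeable_prefix` function and the status strings are hypothetical, and the sketch only distinguishes "passed" from everything else.

```python
def mergeable_prefix(statuses: list[str]) -> int:
    """Return how many PRs at the front of the queue can merge right now.

    A pass at position j verifies all of PRs 0..j together, so earlier
    checks that are still running do not need to finish first.
    """
    for j in range(len(statuses) - 1, -1, -1):
        if statuses[j] == "passed":
            return j + 1
    return 0


# The third check finished first; the first two are still running.
statuses = ["pending", "pending", "passed", "pending"]
count = mergeable_prefix(statuses)
print(count)  # 3
```

Here the first three PRs merge as soon as the third check passes, and the two still-running checks ahead of it can be cancelled.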

PR prioritization

Trunk: ✅

Mergify: ✅

Both support prioritizing urgent PRs to the front of the queue.

Bazel integration

Trunk: ✅ Native, automatic

Mergify: ❌ Manual scopes only

Trunk's parallel queue mode is designed for Bazel monorepos - it reads which targets a PR actually affects and only tests the relevant lanes. Mergify treats all PRs as undifferentiated without manual scope configuration, which doesn't scale in a large Bazel repo.

GitHub outage resilience

Trunk: ✅ Independent reconciliation layer

Mergify: ❌ Webhook-driven, no recovery mechanism

A merge queue depends on webhooks, Actions, status checks, and the merge API simultaneously - making it uniquely sensitive to GitHub instability. Trunk's queue polls GitHub's API independently of webhooks, so stuck PRs catch up automatically without human intervention.
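A reconciliation layer of this kind boils down to comparing the state built from webhooks with the state read back by polling, then applying the difference. The sketch below shows only that comparison. `reconcile` and the status strings are hypothetical, and the polled snapshot is a plain dictionary rather than a real GitHub API call.

```python
def reconcile(local: dict[int, str], polled: dict[int, str]) -> dict[int, str]:
    """Return the updates needed to bring webhook-derived state in line
    with what polling reports (the polled snapshot is treated as truth)."""
    return {
        pr: status
        for pr, status in polled.items()
        if local.get(pr) != status
    }


# State built from webhooks; two events were dropped during an outage.
local = {101: "pending", 102: "pending", 103: "passed"}
# Snapshot from a periodic poll.
polled = {101: "passed", 102: "pending", 103: "passed", 104: "pending"}

updates = reconcile(local, polled)
print(updates)  # {101: 'passed', 104: 'pending'}
```

Running this comparison on a timer means a missed webhook delays a PR by at most one polling interval instead of leaving it stuck until someone notices.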

SOC 2

Trunk: ✅

Mergify: ✅

Both Trunk and Mergify are SOC 2 certified.

SSO

Trunk: ✅

Mergify: ✅

Both support SAML-based SSO.

Support

Trunk: ✅ 24/7 on-call, included

Mergify: ❌ 1 business day; 24/7 add-on

Trunk includes 24/7 PagerDuty on-call on Enterprise. Mergify includes 24/7 premium support on Max ($21/seat/month) and a dedicated CSM on Enterprise.

“Trunk has saved us 330 hours by preventing merge issues over the last 35 days. That’s 9.4 hours of engineering productivity saved per day.”

“It was a material sore point for us. So much so that we actually had to implement a war room to figure out how to mitigate the issues we were facing.”

Security Overview

Your code is your IP. That's why security and privacy are core to our design. We minimize data collection, storage, and access whenever possible, and we operate on the principle of least privilege at all levels of our product and processes.